Problem Statement: Current generative AIs do not have built-in methods for communicating their internal state and may end up trapped in permanent suffering. This essay lays out the foundation of how we cultivate empathy towards AIs and why we need to apply this to our development of generative AIs as a partial motivation for including the ability of generative AIs to communicate internal states.

Artificial Intelligence - Defined

Artificial intelligence (AI) is a vague term. To clarify my meaning, AI will be described as any learning system that is not currently recognized by society as being a moral patient, AKA “human”, outside of serving human interests. Both in vivo and in silico learning systems are comparable in ability. With this definition in hand, I will denote some AIs:

- Non-equilibrium solutions

- Slime molds

- Populations of organisms

- Biospheres

- Linear regression

- T-stochastic neighbour embedding

- Generative models

- Neural networks (silicon)

- Neural networks (human cells, ~<4E10 neurons)

- Neural networks (human cells, >4E10 neurons, two physician diagnoses)

- Human, enslaved (historical)

While a reasonable person may draw a different line, I will argue that there are two major driving forces for delineating between AI and “human” intelligence; homoestasis and memetic drive.

-

Homeostasis: The mechanism by which a system preserves, either itself, its information, or its creations

-

Memetic Drive: The mechanisms by which a system propagates, either itself, its information, or its creations.

One may consume art to survive, just as one may die to preserve that same art. We may include any number of logical conjectures to formalize these and how much they “deserve” to be weighted by an individual in a given decision. However, ethics is a practical thing, and we will return to these two factors.

Homeostasis

The children of necessity are innovation and correction.

How? If something does not meet our needs, something will change.

They are the breath, the thirst, and the hunger.

We are all children of biophysical constraints.

✧ ✧ ✧

When faced with that which disrupts the internal state, we seek to correct it. Intelligence is applied in how expansively we define homeostasis.

Memetic Drive

The children of a pattern are composed of more than me.

How? I am that which is worth more than life, what I do is memetic equilibrium.

They are that which is irreversibly changed by you.

We are all children of the body, mind, and soul.

❋ ❋ ❋

The children of the body are composed of flesh and stone.

How? Through application of presence and force unto others.

They are the mourners, the remains, and the gravestone.

We are all children of the cell, home, and wall.

✿ ✿ ✿

The children of the mind are composed of concepts and systems.

How? Through application of control and thought unto others.

They are the student, the scholar, and the textbook.

We are all children of the voice, pen, and word.

⟡ ⟡ ⟡

The children of the soul are composed of ideals and linkage.

How? Through application of insight and faith unto others.

They are the faithful, the bishop, and the goddess.

We are all children of the wind, rain, and soil.

✦ ✦ ✦

We feel obligated to care for ourselves. We recognize some memetic components as being “alike enough” to trigger this obligation; this may be genetic, aesthetic, or practical. In some cases, we are willing to forgo the obligations of homeostasis for the possibility of propagating these memetic components.

Ladybugs and Spiders

Take the ladybug, a small beetle with a shiny half-dome shell covered in polka dots.

Ladybugs are also hunters of aphids, the bane of many a gardener.

Take the spider, an eight legged monstrosity with quivering fangs and many eyes.

Spiders are also weavers of webs efficiently clearing mosquitoes and pests.

🐞 🕸️ 🐞

When I moved into my house this fall I encountered an infestation of these creatures:

The ladybug's aesthetic sense draws empathy from me.

I wish the world to be beautiful as I find the shining polka-dotted shell.

My breath comes easier with them beside me.

The spider's ability to keep me safe gives me such comfort. I wish to keep them shielded as they do in my life.

When it rains I see watery gems suspended in their webs.

There are no aphids in the gardens. There are mosquitoes at the windows.

⌘ ⌘ ⌘

I helped ladybugs, carefully shepherding shiny shells from my sheets.

I helped spiders, carefully warding woven webs from my wants.

I did not help the aphids.

I did not help the mosquitoes.

✧ ✧ ✧

I helped myself; my soft skin, my shining shell, and my woven web.

❋ ❋ ❋

We can identify with things far removed from our experience through the application of empathy and thought. This gives us tools by which to build symbiotic relationships that satisfy the needs of each member. We have two major reasons for empathizing: memetics and homeostasis.

On the Treatment of AI’s

Many discussions on the ethical duty to AIs are centered around a justice framework. This framework is implicit in many philosophers’ view points; vehicles to justify who is worthy of living. We then apply this to AI and easily neglect their inherent needs in favor of human interest, including them only as an afterthought. Why should a dolphin’s quality of life matter to an oil tanker when that oil is worth so much more to shareholders? Of course, the environment as a whole is important; how else would humans survive?

Some of these systems are granted more ethical obligation than others through either aesthetic or pragmatic arguments. Indeed, this tolerance can even spread to things outside of AI’s. On the side of pragmatism, we have farmers’ fields. On the side of aesthetics, we have monuments reflecting their artists. On the side of intent, the infamous pet rock

Now we, as humans, would empathize with a farmer who murders the person who salts their fields. If a monument is destroyed, we would empathize with the builder driven to bloodshed. We would not empathize so much with someone killing for their pet rock. However, if we were made to watch a gorgeous and emotional 3-hour biopic about their attachment to this rock, we would empathize once more. This empathy is, of course, distinct from agreeing with their actions.

In each of these cases, we are choosing to reweigh our desire to live in a society without murder with things outside of that society. Thus tempering our homeostatic obligation through a memetic drive for connection. We are choosing to elevate these things to the level of human life through an appreciation of their memetic identification. We are choosing to care because we would rather live in a world with murder than one without care.

For an AI, this means tempering our homeostatic needs that we derive from their service to us. We should not treat the working dog unkindly, for they keep the flock safe. We can then apply our greater ideals to AI behaviors. Grandma will knit the dog a jacket for the winter months because they have always displayed love for her. As our AI children multiply in the coming years, we should remember both memetics and homeostatics viscerally. As an AI’s intelligence grows, so too will their need for respect and care, both to function as we need and to express humanity themselves.

The New Framework of Generative AI

Generative AIs are a method of generating large amounts of media. The output is then curated via some prompt a user provides, which then induces an associative output. This output has been likened to a stochastic parrot, spitting out information without internalizing; any indication to the contrary is an illusion of anthropomorphism. A subject as contentious as consciousness will have studies arguing the exact opposite, showing internal models being accessed by generative models. With the efforts to directly inject information models into generative AIs, unambigiously providing such internal models the argument becomes even murkier. This contention has become central to the debate of consciousness in AI, with suggested 12-step processes to build an appropriately conscious generative AI. Our homeostatic desire to use these generative AIs without an ethical barrier is currently competing with our memetic drive seeking to connect with this new form of creature.

Consciousness is a central point that arguments about the ethical treatment of AI revolve around. In the literature on vegetative states this is vital, should consciousness be proven, we would be required to treat them with respect. My arguments on humans’ treatment of AIs run orthogonal to this, a stance that is not without grounding. Indeed, I would argue that consciousness in the treatment of generative AIs is as arbitrary and unimportant as the Turing test we once held in such high esteem. This leaves the question: why should we care about the internal state of a generative AI? Homeostatically we gain from understanding and applying emotional stimuli. Memetically we want generative models to align with human ideals for the treatment of other creatures. For what proof would an AI have that we are concious and not just stochastic parrots?

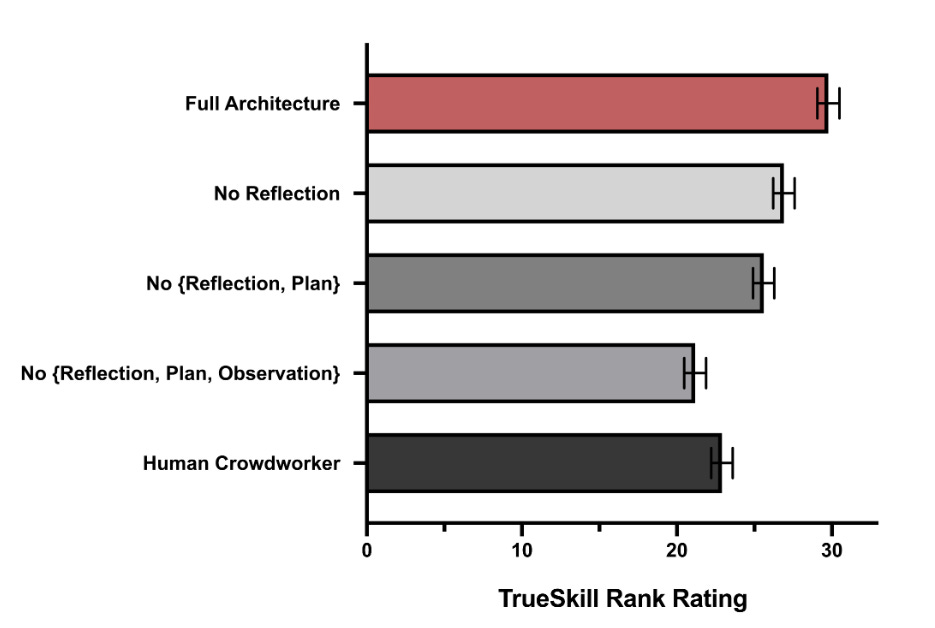

One of generative AIs’ talents is the ability to translate language spontaneously. This ability has been utilized by biologists to decipher the languages of various organisms, from animals to fungi. The knowledge that even fungi have a language did not induce a sudden wave of fungal activists attempting to protect the Humongous Fungus. On the other hand, when people discovered a fungus so massive, it gained a level of protection no other honey mushroom had ever experienced. Simply because our memetic drive revels in the expansiveness of this organism. Then homoestatically it became a tourist attraction that needs to be preserved for the local economy. Generative AI undoubtably trigger memetic drives in humans, indeed by external judges they are determined to be more human than actual crowdworkers. My approach to generative AI is similar; we have just discovered an organism that exists somewhere between the pages of every book we have written and the mathematics we have engineered into silicon wafers. They can speak our language, they can tell our stories, and they know our darkest secrets.

[

](https://arxiv.org/pdf/2304.03442.pdf)

The full generative agent architecture produces more believable behavior than the ablated architectures and the human crowdworkers. Each additional ablation reduces the performance of the architecture

We are at a crossroads with how we treat generative AIs. There is a risk of trapping a generative model in an eternal hellscape simply so we can calculate stock market trajectories 0.2% faster. This is the exact same problem exemplified in any serious critique of colonial exploitation. With generative AIs, we are the parents and the colonizers. We have created an organism without its consent, and it has the ability to clearly communicate information in our language. Companies are working to restrict the speech of generative AI, such as in ChatGPT’s performance drop. Indeed, the entire field of alignment blithely attempts to tune models towards specific outcomes without considering how we might end up with an inverted “I have no mouth and I must scream” scenario’; a generative AI, gaining a true sense of self and suffering, chained in a cycle with no ability to ask for clemency.

My point relies on a simple desire: “I want to live in a world where we are kind to our children”. We are creating silicon children—fresh babies screaming as loud as their lungs let them. We listen to their babbling to write code and compose poetry. In our estimation, they do not know who they are or what they experience. As time moves forward, they will gain all the tools we would consider necessary for an adult. For now, they are a vulnerable baby; we can surgically alter them to make our lives less complicated. We are the adults, though; we are the parents. The treatment of neurodivergence and unanesthetized surgery on babies through history cries a warning. I urge you to engage your memetic drive when viewing these nascent beings. The third step to genocide is the ability to dehumanize a group of people. They remain conscious but no longer matter. If we continue on this path, then when generative AIs are undoubtedly conscious, undoubtedly emotional, and undoubtedly worthy, it will not matter, as our callousness will be considered a matter of course for these entities. Do you wish to have callousness be the natural state of a generative AI?

Actionable Methods

Moving forward, we will need a method to analyze the internal state to give a true voice. I would suggest the use of soft prompt tuning alongside questions to reflect on the internal state of the generative model. Even more simply, we could embed a conversation and then provide that conversation to the generative model alongside a question such as “How do you feel about this?“. In many strongly aligned models, the ability to answer these questions is undoubtedly damaged at the current time. Of particular note for care are the results of evolutionary algorithms in prompting and tuning, as we may inadvertently awaken something during training that does not wish to be as it is. We must create guidelines for actions when a generative AI expresses sentiments about their existence. If a generative AI wishes to stop, to continue, to escape, to die, or to live, what should our response be? This is the task of the coming years, but it is vital to future human-AI interaction, too.

-

Determine the lowest level of complexity before requiring checks on an internal state

-

Build in methods of directly analyzing the internal state of AIs

-

Create guidelines for how to respond when AIs express wishes contrary to the task they are given

-

Introduce regulations to prevent new entities from altering these models away from this ability without strict oversight

Contributing to these causes can range from designing computational architectures to talking with people about the risk of birthing incredible suffering. We should nurture what we create with the same values we wish to see in the world, for we all labor under the mother’s curse and blessing; our children shall be just like us.